Generative AI is reshaping creative work, and at the heart of this shift are diffusion models, a powerful class of generative models that enables new workflows in art, design and animation. From turning a text prompt into a detailed image to animating a sequence of frames guided by motion cues, diffusion models are changing what individual creators and small teams can produce. In this post we’ll explore how they work, why they’re transformative and how they’re being applied in next-gen creative workflows.

What Are Diffusion Models?

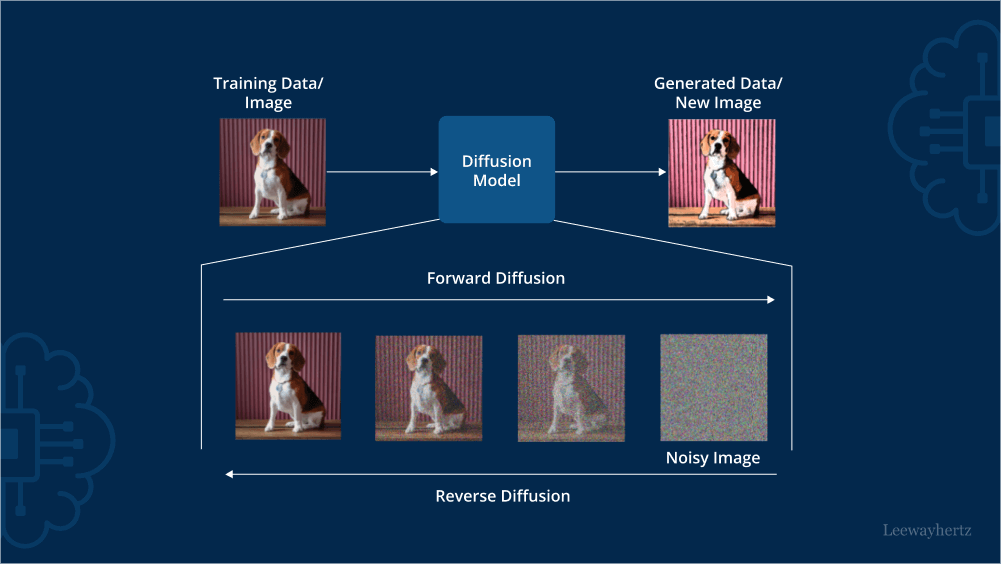

In simple terms, a diffusion model generates new data (images, audio, video) by starting from random noise and gradually refining that noise into a coherent output. The process has two halves:

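Forward process: during training, small amounts of Gaussian noise are added to real data over many steps until only pure noise remains. The model learns to undo this, typically by predicting the noise that was added at a given step.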

Reverse (denoising) process: at generation time, the model starts from pure noise and removes a little of it at each step, gradually producing new data that matches a condition (such as a text prompt).

Because generation is broken into many small denoising steps, and the condition can steer every one of them, diffusion models have become particularly good at high-quality output and rich conditioning.
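To make the two processes concrete, here is a minimal toy sketch in plain Python. It assumes a four-value list standing in for an image, a DDPM-style linear noise schedule (beta rising from 1e-4 to 0.02 over 1,000 steps), and a hypothetical `oracle_noise_predictor` that cheats by knowing the target data. In a real system a trained neural network makes that prediction from the noisy input, the step index and the condition alone. The names `forward_noise`, `oracle_noise_predictor` and `reverse_sample` are illustrative, not taken from any library.

```python
import math
import random

# Toy assumptions: a DDPM-style linear noise schedule over T steps.
T = 1000
betas = [1e-4 + (0.02 - 1e-4) * t / (T - 1) for t in range(T)]
alphas = [1.0 - b for b in betas]
alpha_bars = []  # cumulative product of alphas: how much signal survives to step t
running = 1.0
for a in alphas:
    running *= a
    alpha_bars.append(running)


def forward_noise(x0, t, rng):
    """Forward process: jump straight to step t by mixing data with Gaussian noise."""
    ab = alpha_bars[t]
    return [math.sqrt(ab) * x + math.sqrt(1.0 - ab) * rng.gauss(0.0, 1.0) for x in x0]


def oracle_noise_predictor(xt, t, x0):
    """Hypothetical stand-in for a trained network: returns the exact noise in xt.

    A real model only sees xt, t and the condition (e.g. a text prompt) and
    has to learn this prediction; here we cheat by knowing x0.
    """
    ab = alpha_bars[t]
    return [(x - math.sqrt(ab) * c) / math.sqrt(1.0 - ab) for x, c in zip(xt, x0)]


def reverse_sample(x0_target, rng):
    """Reverse process: start from pure noise and denoise one step at a time."""
    x = [rng.gauss(0.0, 1.0) for _ in x0_target]
    for t in reversed(range(T)):
        eps = oracle_noise_predictor(x, t, x0_target)
        coef = betas[t] / math.sqrt(1.0 - alpha_bars[t])
        mean = [(xi - coef * ei) / math.sqrt(alphas[t]) for xi, ei in zip(x, eps)]
        # Add fresh noise on every step except the final one.
        sigma = math.sqrt(betas[t]) if t > 0 else 0.0
        x = [m + sigma * rng.gauss(0.0, 1.0) for m in mean]
    return x


rng = random.Random(0)
image = [0.8, -0.3, 0.5, 0.0]
print(alpha_bars[-1])                    # tiny: after T steps almost no signal remains
print(forward_noise(image, T - 1, rng))  # looks like pure noise
print(reverse_sample(image, rng))        # values very close to `image`
```

Because the stand-in predictor is exact, the reverse loop lands back on the target values. With a trained model there is no fixed target: different starting noise leads to different, new outputs drawn from what the model has learned.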

Why They Are Transformative for Art & Animation

Unprecedented creative freedom: You can provide a simple description like “a vibrant futuristic cityscape at dusk in a cinematic style” and get a visually compelling image.

Versatility of conditioning: These models can be conditioned on text, images, sketches or style references, enabling diverse workflows such as text-to-image, image-to-image, style transfer and even animation.

Animation & motion: Emerging research is pushing diffusion models into motion generation — coherent sequences of frames with believable temporal dynamics.

Democratising creative tools: Artists, designers and smaller studios can now rapidly generate mood boards, preliminary animation and concept art, cutting both cost and turnaround time.

Customisation: Models can be fine-tuned on a particular style or on company and brand aesthetics, so the output aligns with your design language or character set.
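Text prompts are one of the conditions mentioned above. A common way tools strengthen a prompt’s influence is classifier-free guidance: at each denoising step the model predicts the noise twice, once with the prompt and once without, and the two predictions are extrapolated. The sketch assumes both predictions are already available as plain lists; `apply_guidance` is a hypothetical helper name, and `guidance_scale` corresponds to the “guidance” or “CFG scale” setting many tools expose.

```python
def apply_guidance(eps_uncond, eps_cond, guidance_scale):
    """Classifier-free guidance: move the noise prediction away from
    'no prompt' and towards 'with prompt'.

    guidance_scale = 0 ignores the prompt, 1 uses the plain conditional
    prediction, and values above 1 exaggerate the prompt's effect.
    """
    if len(eps_uncond) != len(eps_cond):
        raise ValueError("predictions must have the same shape")
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Made-up noise predictions for a two-value latent.
eps_without_prompt = [0.5, -0.25]
eps_with_prompt = [1.0, 0.25]
print(apply_guidance(eps_without_prompt, eps_with_prompt, 2.0))  # [1.5, 0.75]
```

Higher scales usually follow the prompt more literally at some cost to variety and naturalness, so the scale is worth treating as a creative control rather than a fixed setting.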

Applications in Next-Gen Creative Workflows

Text to image: Create illustrations, concept art, marketing visuals and architectural renders from prompts alone.

Sketch to finished piece / style transfer: Turn a rough sketch into polished art, or apply a specific artistic style to an existing image.

Animation and motion graphics: Use diffusion techniques that handle motion consistency, key-frame conditioning, and frame interpolation to generate animations or short video sequences.

3D and multi-modal content: The frontier includes volumetric and 3D model generation as well as text-to-video and image-sequence generation, extending diffusion from still images to moving and three-dimensional content.

Brand asset generation: Studios can use diffusion pipelines to build characters, backgrounds, textures and promotional visuals aligned with their brand identity, faster than with traditional manual design.

How to Get Started (At a High Level)

Choose a diffusion framework or tool (many open-source and commercial options exist).

Develop your prompt craft: effective generation often hinges on how well you describe the subject, style, lighting, composition and mood.

Prepare reference materials if required: sketches, style sheets, mood boards.

Iterate: generate variants, select the best, refine or regenerate.

For animation: plan for consistency, since characters, scenery and lighting must remain stable across frames; consider techniques that maintain temporal coherence.

Post-processing: plan for upscaling, editing and compositing, since the model output is usually one stage of a broader pipeline.
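The prompt-craft and iteration steps can be organised with a little structure. The sketch below is hypothetical: `PromptSpec` and `plan_variants` are illustrative names, not part of any tool, and they only build prompt strings and job descriptions that you would hand to whichever framework you chose. It assumes, as is broadly the case, that a tool reproduces an image when given the same prompt, settings and seed, so recording the seed of a favourite variant lets you regenerate or refine it later.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container for the prompt elements discussed above."""
    subject: str
    style: str = ""
    lighting: str = ""
    composition: str = ""
    mood: str = ""

    def to_prompt(self) -> str:
        parts = [self.subject, self.style, self.lighting, self.composition, self.mood]
        # Drop empty fields so the prompt never contains stray commas.
        return ", ".join(p.strip() for p in parts if p.strip())


def plan_variants(spec: PromptSpec, base_seed: int, count: int) -> list[dict]:
    """Hypothetical job list: the same prompt with a different seed per variant."""
    prompt = spec.to_prompt()
    return [{"prompt": prompt, "seed": base_seed + i} for i in range(count)]


spec = PromptSpec(
    subject="a vibrant futuristic cityscape at dusk",
    style="cinematic style",
    lighting="warm neon glow",
    mood="hopeful",
)
print(spec.to_prompt())
# a vibrant futuristic cityscape at dusk, cinematic style, warm neon glow, hopeful
for job in plan_variants(spec, base_seed=1234, count=3):
    print(job["seed"], job["prompt"])
```

Keeping each element in its own field makes it easy to vary one thing at a time (only the lighting, say) while the rest of the prompt and the seed stay fixed.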

Considerations and Challenges

Generation can be computationally heavy, because every image needs many sequential passes through a large neural network; the cost rises further at high resolutions and for animations with many frames.

For motion/animation, maintaining continuity, smooth transitions and style consistency remains challenging.

Ethical and copyright concerns: questions about training data, derivative styles and ownership of generated content can be complex.

Prompt engineering: although easier than writing code, crafting good prompts still takes skill and iteration.

Real-time and runtime constraints: because sampling runs many denoising steps in sequence, latency can make live or interactive workflows harder to build.
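A back-of-the-envelope count shows why cost and latency grow so quickly. The sketch assumes each frame is denoised independently and that classifier-free guidance doubles the work per step (one pass with the prompt, one without); real video models and samplers differ, so treat it as a rough model. The 30-step and 24 fps figures are illustrative, `denoiser_passes` and `estimated_seconds` are hypothetical helpers, and `seconds_per_pass` would have to be measured on your own hardware.

```python
def denoiser_passes(frames: int, steps: int, cfg: bool = True) -> int:
    """Rough count of network passes, assuming each frame is denoised on its own.

    With classifier-free guidance (cfg=True) each step is assumed to need two
    passes: one with the prompt and one without.
    """
    passes_per_step = 2 if cfg else 1
    return frames * steps * passes_per_step


def estimated_seconds(frames: int, steps: int, seconds_per_pass: float, cfg: bool = True) -> float:
    """Hypothetical latency estimate; measure seconds_per_pass on your own hardware."""
    return denoiser_passes(frames, steps, cfg) * seconds_per_pass


still = denoiser_passes(frames=1, steps=30)
clip = denoiser_passes(frames=4 * 24, steps=30)  # a 4-second clip at 24 fps
print(still, clip, clip // still)  # 60 5760 96
```

Under this model, cost scales linearly with both frame count and step count, which is why reducing the number of sampling steps is one of the main levers for interactive use.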

Conclusion

Diffusion models represent a paradigm shift in how visual content is created and animated. They bring power, flexibility and speed to creators, enabling workflows that were previously expensive or impractical. Whether you’re an artist exploring new styles, a design studio accelerating production or a brand building visual assets at scale, these models offer a new generative toolkit. Embrace iterative creative loops, experiment with prompts and conditions, and you’ll find yourself at the frontier of next-gen AI art and animation.